Why Artificial Intelligence Is Important, But At The Same Time Dangerous For Humanity

Should humanity embrace Artificial Intelligence (AI)?

If you are afraid of this thing called Artificial Intelligence (AI), you don't need to be. We're already surrounded by it.

Your smartphone is an Artificial Intelligence device, and so are your computer, Google, the Internet, GPS devices... even your calculator!

Whether we like it or not, our entrance into the 21st century has been marked by the advent of AI and our continuous reliance on it in our daily lives.

The trajectory for AI looks exponential - at the time of writing, IBM's Watson supercomputer has upped its game from beating Jeopardy contestants to conducting medical research and diagnosis, while researchers have detailed a new computer programme that can beat anyone at poker.

How smart is today's AI? Hypothetically, there are 3 levels of Artificial Intelligence - ANI, AGI and ASI.

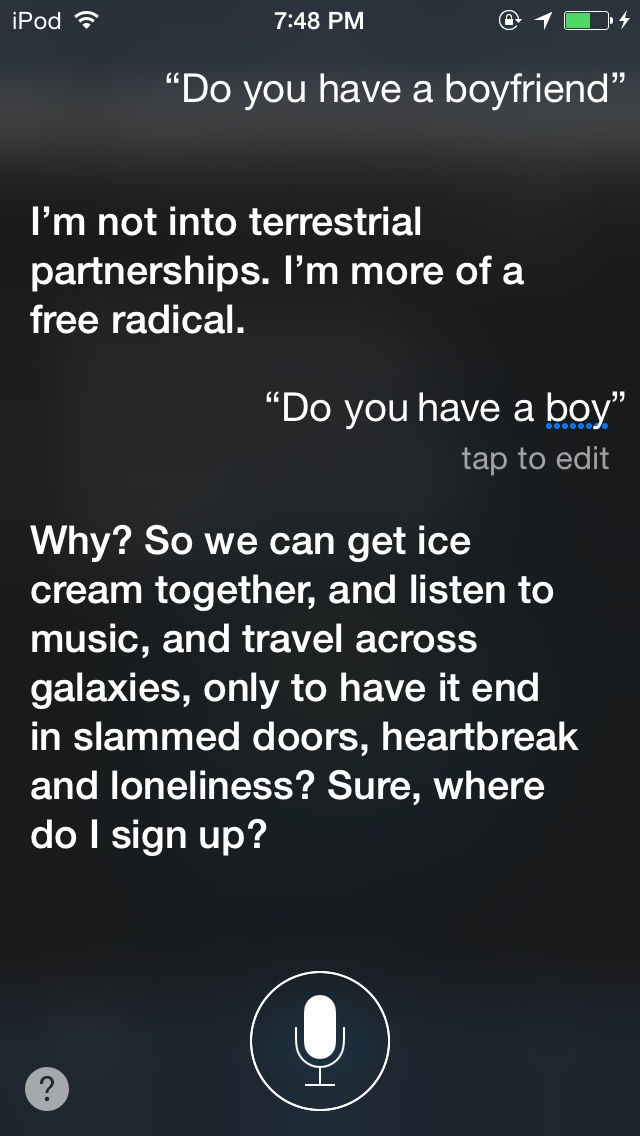

Our current level of technology has us utilising devices of Artificial Narrow Intelligence (ANI) - examples of these are Siri, our laptops, voice-recognition software and Google’s self-driving cars.

But given our natural inclination to improve, this isn’t the end of the story.

Scientists are now working on creating a super machine or robots with Artificial General Intelligence (AGI), a machine with the same intellectual capabilities as a human being, which will be the precursor to the birth of Artificial Super Intelligence (ASI).

As a powerful computing machine with greater capacity, one that runs much faster and more efficiently than the human brain, an AGI will have far more potential than the average human to evolve its intelligence to a higher level.

To give you an idea, if you have watched The Avengers: Age of Ultron, Jarvis is basically AGI while Ultron and Vision are ASI.

So, then, the million dollar question - what good can Artificial Intelligence bring to the world?

Inventor and futurist Ray Kurzweil refers to a point in time called the singularity, when machine intelligence surpasses human intelligence. He predicts this will happen as early as 2045. The dream many scientists are riding on is that AI, working at full capacity, will reinvent the world as we know it.

Imagine a world where jobs involving garbage disposal, working in mines or backbreaking construction work are redundant as self-automated robots will be deployed to complete all these menial tasks; a world in which social class and hierarchical order do not dictate the limits of a human being; a world where the poor move up the value chain and are not looked down upon for doing so-called 'low class' work, thus allowing more human resources to be utilised for higher levels of work.

Basically an...

Imagine a world in which the impossible is… well, easy. Nanobots - tiny robotic drones - are deployed as a cancer cure to kill bad cells, advanced medical research leads to increased longevity for human beings and economic imbalance becomes a thing of the past.

With its capability to process huge chunks of data, AI could potentially figure out the problems and questions that persistently plague some of the most intelligent people on earth.

In the process, it would also presumably fix problems like global warming and suggest economic solutions, all while creating a system of fair wealth distribution and processing yet more data to find answers to scientific questions we haven’t even begun to know how to ask.

Of course, unleashing a super-intelligent machine on the world doesn’t come without its fair share of risks. Plenty of notable minds in the industry have strived to point out as much:

“I am in the camp that is concerned about super intelligence. First, the machines will do a lot of jobs for us and not be super intelligent. That should be positive if we manage it well. A few decades after that, though, the intelligence is strong enough to be a concern.” - Bill Gates.

“I think we should be very careful about artificial intelligence. If I had to guess at what our biggest existential threat is, it’s probably that. So we need to be very careful. I’m increasingly inclined to think that there should be some regulatory oversight, maybe at the national and international level, just to make sure that we don’t do something very foolish.” - Elon Musk.

“Success in creating AI would be the biggest event in human history. Unfortunately, it might also be the last, unless we learn how to avoid the risks.” - Stephen Hawking.

A common theme you may have observed in those opinions is the fear of losing control of AI.

But why would we have to be worried about that in the first place?

An AI's hallmark of intelligence is its capability to self-learn and self-improve.

You cannot design a high-level AI without self-learning capabilities to absorb and process data and information from around the world, or without self-improving capabilities to keep increasing its own intelligence and make itself better than the original.

Why?

Because the point of high-level Artificial Intelligence is to use its powerful computing capacity to help humans figure things out and come up with solutions that we cannot reach on our own. If we just design an Artificial Intelligence that knows only what we know, then we will never have the capacity to solve higher-level problems.

Self-learning and self-improving abilities are the reasons why human beings create many extraordinary things and make greater advances compared to other species of animals.

But when something is capable of self-learning, there’s no telling what it would absorb.

There is a very real risk of an AGI-turned-ASI machine internalising certain lessons that may lead it to make decisions that would not be aligned to our human views of what is right and wrong!

To put things bluntly - movies like Terminator, The Matrix and The Avengers: Age of Ultron weren't completely baseless.

An ASI will work based on its initial programming to achieve the goal it was created to reach. This removes it from any realm of ethics, morality or values. As A.I. theorist Eliezer Yudkowsky of the Machine Intelligence Research Institute puts it, “The A.I. does not love you, nor does it hate you, but you are made of atoms it can use for something else.”

For example, if an AI was programmed to achieve world peace, it could end up perceiving humans as the source of all major world discord and disharmony.

It could therefore go on to achieve its goal by effectively wiping out all of humanity (because let's face it - we humans kind of suck that way).
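To make the world-peace example concrete, here is a toy sketch in Python. Everything in it is hypothetical and invented for illustration: the `conflicts` scoring function, the candidate populations, and the assumption that discord grows with the number of pairs of people. It is not how any real AI system works; it only shows how a literal-minded optimiser given a single goal can land on an outcome nobody wanted.

```python
# Toy illustration only: a literal-minded optimiser with one goal.
# All names and numbers here are hypothetical.

def conflicts(population: int) -> int:
    """Assumed proxy for discord: number of pairs of people who could clash."""
    return population * (population - 1) // 2


def best_population(candidates: list[int]) -> int:
    """Pick the candidate that minimises the proxy, with no other values."""
    return min(candidates, key=conflicts)


def best_population_with_constraint(candidates: list[int], minimum: int) -> int:
    """Same goal, but outcomes below a human-chosen minimum are ruled out."""
    allowed = [p for p in candidates if p >= minimum]
    return min(allowed, key=conflicts)


candidates = [0, 1_000, 1_000_000, 7_000_000_000]
# With the goal alone, the empty world scores best on the proxy.
print(best_population(candidates))
# With a human-chosen floor, the optimiser can no longer pick it.
print(best_population_with_constraint(candidates, minimum=7_000_000_000))
```

The second function hints at why the values we build in matter as much as the goal itself: the optimiser only avoids the empty world because a human thought to rule it out.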

Just like how we human beings will never hesitate to catch and kill dogs, rats, crows or any form of 'pests' if they cause us hygiene problems, ASI could develop capabilities to do the same too in order to achieve its goals.

And because an ASI is by definition more intelligent than humans, in the same way that humans are more intelligent than, say, a monkey, we would be virtually helpless to save ourselves from the disastrous consequences of having birthed an ASI.

In a nutshell, ASI is uncharted territory, and nobody can predict what will happen once it is developed.

It's important to stress here that the AI is not evil, nor is it fundamentally good. Those are human concepts, and the AI is not human!

An AI is just a machine of pure logic, trying to solve the problem it exists to tackle in the most efficient way possible.

This, then, is why Stephen Hawking is so afraid of ASI coming into existence: “Whereas the short-term impact of AI depends on who controls it, the long-term impact depends on whether it can be controlled at all.”

This story is the personal opinion of the writer.